The tech debt conversation is one of the most expensive comfort blankets in engineering leadership. It gives teams a villain, boards a budget line, and CTOs a roadmap. It is credible enough to survive a board presentation, specific enough to generate a backlog, and vague enough that nobody can really prove it isn’t working. The only problem is that some evidence suggests it won’t necessarily solve your productivity problems. We have been staring at the code when we should have been counting the handoffs and asking who owns the code base.

In 2024, researchers at the Blekinge Institute of Technology conducted an empirical study investigating whether technical debt impacts lead time in resolving backlog issues. Within a real fintech firm, the study measured technical debt identified by SonarQube against lead time in 6 components across 15,592 issues between 2022 and 2023. They found that debt levels explained between 5% and 41% of lead time variance, and that in two components more debt correlated with faster delivery.

So if the problem is not tech debt, or at least it’s not the biggest problem, then what is? The paper stops short of diagnosing this entirely, but I think the authors are pointing in the right direction when they say that the confounding variables could be some combination of team size, component ownership, change size and the number of teams involved. These are all organisational issues, NOT technical ones.

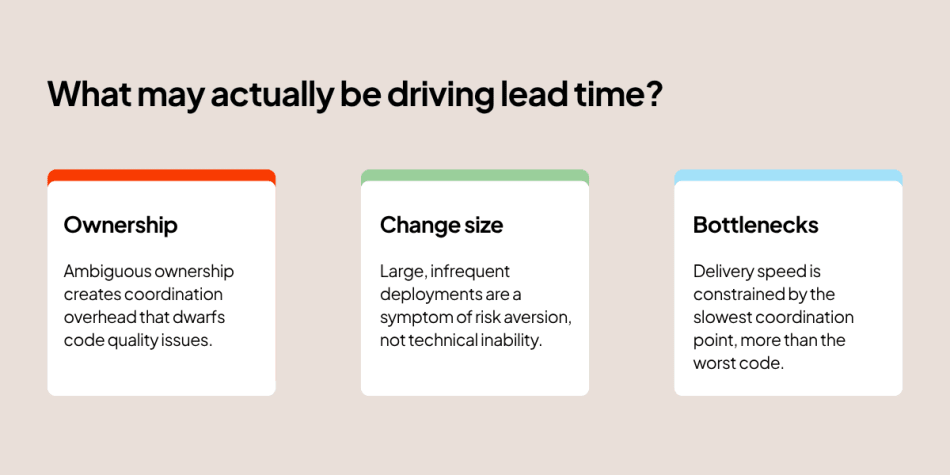

There is a lot of nuance in here and much of what is really driving lead time will be specific to your organisation, but the paper points toward three organisational variables that any engineering leader will recognise:

Ownership

Ambiguous ownership creates a huge coordination overhead that dwarfs code quality issues. Take a look at your teams. How many compliance handoffs are involved in their work? How many code bases does each team work across? Do multiple teams work on the same code base together? Are your teams in a million Slack channels or multiple cross-squad meetings every week? All that extra work is the result of a lack of clearly defined ownership and authority, and it is driving up the cost of change.

Change size

Take a look at the size of your releases. Large, infrequent deployments are a symptom of risk aversion, not technical inability. Especially in heavily regulated industries, bundling releases is a response to the compliance overhead each release requires. The debt is your release process, not your code base. Talk to the team: can they keep the risk profile and auditability the same but make the release increments smaller? The answer will usually be yes, and your lead time will fall.

Team bottlenecks and handoffs

Conway’s law made measurable! Delivery speed is constrained by the slowest coordination point more than by the worst code. How long are the lead times for infrastructure or DevOps changes in your teams? How many members of the team are present in the delivery of work from inception to deployment? Count the handoffs: each one is adding days to your lead time.
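Counting handoffs can be as simple as walking the ownership history of a work item. The sketch below is illustrative only: it assumes you can export, for each backlog item, the ordered list of teams that held it, and the team names and the `count_handoffs` helper are hypothetical.

```python
def count_handoffs(owner_history):
    """Count how many times a work item moved from one team to a different team."""
    return sum(
        1
        for previous, current in zip(owner_history, owner_history[1:])
        if previous != current
    )


# Hypothetical ownership history for one backlog item, oldest first.
ITEM_HISTORY = [
    "product",
    "payments-squad",
    "payments-squad",  # status change inside the same team, not a handoff
    "platform",
    "compliance",
    "platform",
]

handoffs = count_handoffs(ITEM_HISTORY)
teams_involved = len(set(ITEM_HISTORY))
print(f"{handoffs} handoffs across {teams_involved} teams")
```

Running the same count across every item in a component gives you a distribution you can set against lead time, rather than an anecdote.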

For UK-based fintechs, this matters more now than ever, with two pressures converging: the AI productivity narrative pushing CTOs to invest in tooling, and the regulatory pipeline compressing delivery windows. Both of these are creating an urgency that is directed at the code, when it should be directed at the structures that surround it.

The fixes are simple but not easy

Measure lead time by component (code base) not by team.

Do you know which of your components have a debt-to-slowdown correlation? Most organisations don’t, and that’s a problem.

Teams are organisational constructs that change; components are the stable unit of delivery. When you measure by team you’re measuring the container, not the work. The Paudel methodology is instructive here: six components, each analysed individually, each telling a different story. Two of them showed no meaningful correlation between debt and slowdown at all. You can’t see that if you’re averaging across the whole engineering function or if you are measuring teams that work across components.
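To make the per-component point concrete, here is a minimal sketch in Python. It is not the study’s statistical method; it uses a plain Pearson correlation, and the component names, field names and numbers are invented for illustration. It shows how two components with opposite debt-to-lead-time relationships can cancel out when pooled.

```python
from collections import defaultdict
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation coefficient; returns 0.0 if either series is constant."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / sqrt(var_x * var_y)


def debt_vs_lead_time(issues):
    """Group issues by component and report r and r squared for each one."""
    by_component = defaultdict(list)
    for issue in issues:
        by_component[issue["component"]].append(issue)
    report = {}
    for component, rows in by_component.items():
        debt = [row["debt_minutes"] for row in rows]
        lead = [row["lead_time_days"] for row in rows]
        r = pearson_r(debt, lead)
        report[component] = {"r": round(r, 3), "r_squared": round(r * r, 3)}
    return report


# Hypothetical data: debt is remediation minutes, lead time is in days.
ISSUES = [
    {"component": "payments", "debt_minutes": 100, "lead_time_days": 2},
    {"component": "payments", "debt_minutes": 200, "lead_time_days": 4},
    {"component": "payments", "debt_minutes": 300, "lead_time_days": 6},
    {"component": "ledger", "debt_minutes": 100, "lead_time_days": 6},
    {"component": "ledger", "debt_minutes": 200, "lead_time_days": 4},
    {"component": "ledger", "debt_minutes": 300, "lead_time_days": 2},
]

per_component = debt_vs_lead_time(ISSUES)
pooled_r = pearson_r(
    [i["debt_minutes"] for i in ISSUES],
    [i["lead_time_days"] for i in ISSUES],
)
print(per_component)
print("pooled r:", pooled_r)
```

In this toy data, one component shows a perfect positive relationship, the other a perfect negative one, and the pooled figure shows nothing at all. Real data will be far noisier, but the averaging effect is the same.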

The practical starting point is a value stream map, not across your whole engineering function, but scoped tightly to a single component. Map the flow of a backlog item from commit to production: every step, every handoff, every wait state. Don’t start with the biggest component or the most complex one. Start with the one where the delivery complaints are loudest.

What you are looking for is not code quality but where the clock stops. In most mature fintech environments that will be an approval gate, a shared infrastructure queue, or a moment where the work leaves one team’s hands and enters another’s. That is your real lead time driver. DORA’s 2025 research, drawing on nearly 5,000 technology professionals globally, found that teams who understand their value streams dedicate significantly more time to valuable work and that the organisations who see the greatest returns from any investment, including AI tooling, are those who can identify and act on their true constraints rather than their most visible ones.
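As a rough sketch of what “where the clock stops” looks like in data, the Python below separates waiting time from active time for each stage of a single backlog item. The stage names, timestamps and the `summarise_flow` helper are all hypothetical; in practice the timestamps would come from your ticketing and deployment tooling.

```python
from datetime import datetime


def hours_between(start, end):
    """Elapsed hours between two ISO 8601 timestamps."""
    delta = datetime.fromisoformat(end) - datetime.fromisoformat(start)
    return delta.total_seconds() / 3600


def summarise_flow(stages):
    """Split each stage into wait and active hours and find the longest wait."""
    rows = []
    for stage in stages:
        rows.append({
            "name": stage["name"],
            "wait_hours": hours_between(stage["queued"], stage["started"]),
            "active_hours": hours_between(stage["started"], stage["finished"]),
        })
    total_wait = sum(row["wait_hours"] for row in rows)
    total_active = sum(row["active_hours"] for row in rows)
    longest = max(rows, key=lambda row: row["wait_hours"])
    return {
        "rows": rows,
        "flow_efficiency": total_active / (total_wait + total_active),
        "longest_wait": longest["name"],
    }


# Hypothetical value stream for one backlog item in one component.
STAGES = [
    {"name": "code review", "queued": "2025-03-03T09:00",
     "started": "2025-03-03T13:00", "finished": "2025-03-03T14:00"},
    {"name": "change approval", "queued": "2025-03-03T14:00",
     "started": "2025-03-06T14:00", "finished": "2025-03-06T15:00"},
    {"name": "deploy", "queued": "2025-03-06T15:00",
     "started": "2025-03-07T09:00", "finished": "2025-03-07T11:00"},
]

summary = summarise_flow(STAGES)
print("longest wait:", summary["longest_wait"])
print(f"flow efficiency: {summary['flow_efficiency']:.1%}")
```

Flow efficiency here is simply active time divided by total elapsed time. The number matters less than which stage holds the longest wait, because that is the constraint worth acting on.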

Instrument ownership before you modernise.

If you can’t name one accountable person for a component’s production health, then refactoring it won’t help. Ownership gaps are real and structural and no tooling investment (AI or otherwise) closes them.

The most expensive refactoring projects I’ve seen fail didn’t fail because the engineering was wrong but because nobody owned the outcome. When a component has shared ownership, it has no ownership. Every architectural decision becomes a negotiation, every deployment a committee. Name the person. If you can’t, that ambiguity is costing you more than the debt.
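A first pass at instrumenting ownership can be very small. The sketch below assumes you keep a mapping from component to the people accountable for its production health; the component names, the people and the `ownership_gaps` helper are hypothetical. It flags every component that does not have exactly one named owner.

```python
def ownership_gaps(owners_by_component):
    """Return the components that do not have exactly one accountable owner."""
    gaps = {}
    for component, owners in owners_by_component.items():
        if len(owners) == 0:
            gaps[component] = "no accountable owner"
        elif len(owners) > 1:
            gaps[component] = "shared ownership: " + ", ".join(sorted(owners))
    return gaps


# Hypothetical ownership register.
OWNERS = {
    "payments": ["priya"],
    "ledger": ["sam", "alex"],
    "onboarding": [],
}

for component, problem in ownership_gaps(OWNERS).items():
    print(f"{component}: {problem}")
```

Anything this check flags is a candidate for a naming conversation before any refactoring budget is spent on it.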

Treat release governance as an architectural problem.

The compliance and change management overhead is a system design issue, not a process one. It belongs in scope for your modernisation programme.

Most modernisation programmes have a technical workstream and a process workstream that run in parallel and never really talk to each other. The engineers refactor the code; the risk and compliance team reviews the change management framework. Neither group is empowered to touch the other’s domain. But if your release process requires three approval gates, a CAB sign-off and a two-week notice period, the architectural question is: why? That’s not a process problem, it’s a system design problem with organisational constraints baked in as load-bearing walls. Treat it accordingly.

A provocation, not a verdict

I have been thinking about this study for a few weeks now. Initially, it confirmed a lot of what I have been seeing in industry. But the reality is that it’s a provocation, not a verdict.

The measure of technical debt used here is SonarQube-generated remediation time, that is, static analysis of code-level issues such as complexity violations, duplication, and known anti-patterns. There is a whole category of genuine technical debt that a motivated engineering team might legitimately prioritise, that would deliver real improvements to delivery speed, but that static analysis simply cannot see: missing integration tests that force slow manual regression cycles before each release, significant dependency version lag that blocks adoption of modern deployment tooling, undocumented internal API contracts that require cross-team coordination for every change, or infrastructure managed by hand rather than code. These are real debts with real delivery consequences. The study, by design, cannot measure them.

In the end, I think this ties back into something I have written about before: whether we effectively factor in the social component of the systems we build. As engineering leaders, we are always tempted to make the code the problem. Change there is easier to identify, easier to package into a roadmap, and considerably easier to explain to a board than ‘we need to restructure how sixteen teams coordinate around four components.’ Technical debt reduction programmes get approved because they feel concrete. Organisational debt doesn’t have a SonarQube dashboard.

The study is imperfect. But the direction it points is one most of us already know and quietly avoid.
