As designers and product people, we’re obsessed with removing friction to create smooth, effortless experiences. With “As few clicks as possible” as our battle cry, we’ve honed the craft of identifying and erasing friction.

Despite clicks giving way to touch, tap, swipe, and even voice, maximising efficiency and reducing the number of interactions for a user to achieve a goal or complete a job is still UX nirvana. But should it be?

While the logic of reduced clicks is perfectly sound when designing transactional digital products, it falls short when you step outside the UX world and into a more natural human environment.

Let’s face it: you can’t build a lasting relationship with a future life partner, rekindle ties with a long-lost family member, or build mutual trust with your rescue dog by minimising interactions. Instead, the inherently human approach of fostering meaningful, valuable, regular interactions over time remains the gold standard.

AI is Evolving Design

At Waracle, we’re exploring the perspective that fewer clicks are better in a transactional context. But, in scenarios where the journey, the trust and the relationship hold value, the opposite is often true.

Why is this relevant? Breakthroughs in generative AI mean we can now design and build experiences that behave more like humans—they can problem-solve, learn and evolve with user needs. They can even simulate empathy to build trust and create relationships.

Let’s be clear: we’re not talking about “designed” behaviour where teams create complicated decision trees at design-time that map limited user inputs to predetermined responses — the software industry has been faking “intelligence” that way since the 1950s. We’re talking about the new and genuine ability for software-based products to operate with autonomy and judgement at run-time in collaboration with users.

(N.B. Designing and building experiences with AI requires a significant shift in how businesses and product teams spec, design, build and test software; more on that topic later.)

The Blurred Line Between Product and Peer

The opportunity to build digital relationships opens a new front in the battle for consumer spending. We’re moving from a transactional, attention-based economy, where companies battle to grab and monetise our eyes, towards a world where companies race to establish long-term, high-value, meaningful digital relationships.

For example, in an article titled “The Man of Your Dreams For $300”, New York Magazine’s The Cut explores the intricate relationship dynamics between humans and AI chatbots, highlighting how Replika, an AI companion app, allows users to create AI partners. Perhaps surprisingly, half the app’s users, and its fastest-growing segment, are women who flock to the platform for the promise of safe, judgement-free relationships.

People are willing to build relationships with Replika’s intelligent software because it gives them control, lacks personal baggage, and offers unwavering emotional support. Unlike human relationships with inherent complications, these AI companions are perceived as a ‘blank slate’, showing the promise of malleable, baggage-free interactions.

This isn’t a novel concept. The ELIZA effect, named after a 1960s chatbot that simulated a psychotherapist, showed how simple text interactions could evoke deep, emotional responses. The evolution from ELIZA to today’s AI companions highlights the potential for deeper, more meaningful AI-human interactions and collaborations.

Hollywood, unsurprisingly, has been entertaining us with such storylines for years; the emerging bond between humans and AI companions is reminiscent of the intimate dynamics portrayed in the movie “Her”. The difference now is that the technology to do this has arrived.

As AI continues to evolve and become more nuanced in its capabilities, it’s apparent that the distinction between product and peer is blurring, prompting us to re-evaluate the nature of relationships in the digital age. AI’s capacity to mimic genuine human interaction means users are not just interacting with an algorithm but forming connections with a reflection of themselves, underscoring the profound impact of AI on human emotions and relationships.

Digital Relationships in Transactional Environments

But what does this mean in a more transactional environment? Take, for example, a financial services brand. Most consumers don’t have access to personal financial advisers. According to the UK’s Financial Conduct Authority (FCA), 24% of adults have low confidence in managing their money, and 38% rate their financial knowledge as low. However, AI-enabled services are emerging to fill this gap.

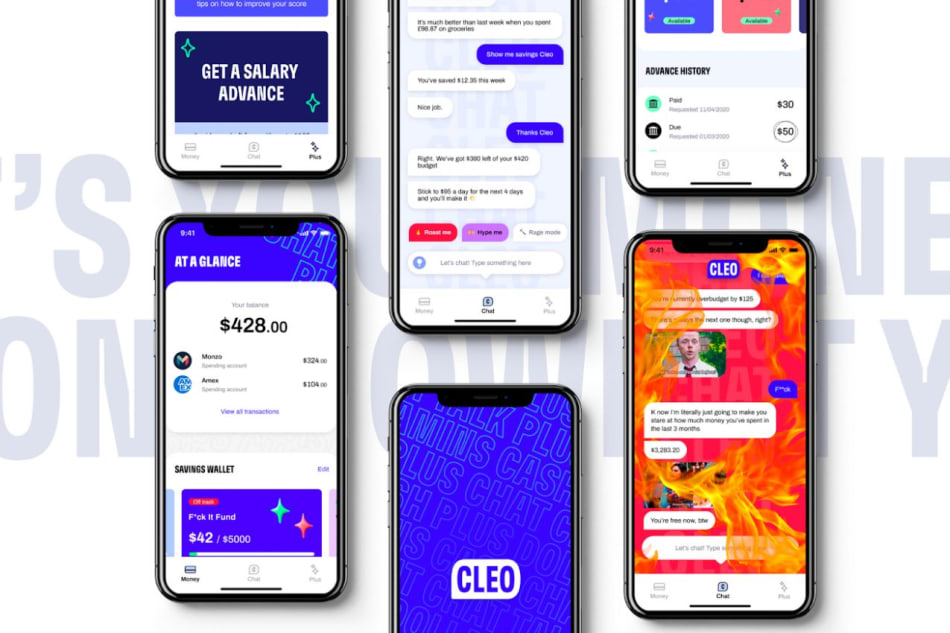

Apps like Wally, Cleo, and Plum offer features like tracking finances across various accounts, predicting expenses, creating spending plans, and automatically saving money. They can suggest hyper-personalised saving strategies based on user goals and compare spending habits with peers.

Most importantly, they build trusted relationships via an engaging, human-esque chat. Cleo will even go as far as giving you a hard time for spending too much at McDonald’s!

As intelligent products evolve, so must the ways we think about and design them. What must we do differently if the days of hyper-optimised transactional flows are gone? One thing is obvious: we must move from designing flows to nurturing relationships. Thankfully, decades of research exist on how we measure and build relationships. There are even mathematical formulas for predicting whether we’ll like a person based on the number of interactions.

For example, the research of John Gottman suggests we need roughly 20 positive interactions for every negative one to hold a positive perception of a relationship. While this only scratches the surface of relationship psychology, it’s easy to see how we would want to apply these formulas to product design and development.
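To make the ratio concrete, here is a minimal sketch of how a product team might track it. Everything in it is an illustrative assumption rather than an established method: the `positive`/`negative`/`neutral` labels, the function names, and the idea that each product interaction can be labelled this way at all. The 20-to-1 target is simply the figure quoted above and should be treated as tunable.

```python
from collections import Counter

# Target taken from the figure quoted in the article; a tunable assumption.
TARGET_RATIO = 20.0


def positive_to_negative_ratio(interactions):
    """Return positives divided by negatives for a list of labels.

    Hypothetical labels: "positive", "negative", "neutral".
    With no negatives, returns infinity if there is at least one
    positive interaction, otherwise 0.0.
    """
    counts = Counter(interactions)
    positives = counts["positive"]
    negatives = counts["negative"]
    if negatives == 0:
        return float("inf") if positives > 0 else 0.0
    return positives / negatives


def meets_target(interactions, target=TARGET_RATIO):
    """True when the observed ratio reaches the target ratio."""
    return positive_to_negative_ratio(interactions) >= target


# Example log: 42 positive, 2 negative and 5 neutral interactions.
log = ["positive"] * 42 + ["negative"] * 2 + ["neutral"] * 5
print(positive_to_negative_ratio(log))  # 21.0
print(meets_target(log))                # True
```

Neutral interactions are ignored here, which is itself a design choice: a team might decide that routine, forgettable exchanges should count for or against the relationship.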

Understanding how we form, build and measure relationships is now as vital a skill set as visual design principles. We need to understand and define what is considered “value” in these relationships, how we ensure we deliver it, how we make the journey to it fulfilling and how we redesign our design processes to enable that to happen.

Ethical Implications of AI-Enabled Relationships

The immense power of automating and digitising relationships raises serious ethical considerations. The recent lawsuit against Meta by 33 US states, spotlighting youth mental health issues on platforms like Instagram, drives this home from both the human and commercial perspectives. We need ethical guidelines and design principles to safeguard user interests and ensure transparency as we integrate AI into the digital landscape. Tristan Harris and Aza Raskin from the Center for Humane Technology have spoken extensively on the AI dilemma and the new responsibilities it bestows upon the designers and creators of such products.

Conclusion: A New Era of AI-Augmented Relationships

The evolution of AI and its potential to simulate human interactions has ushered in a new era of product and user experience design. No longer are we simply streamlining transactional processes; we’re sculpting meaningful, valuable, and lasting relationships. As AI blurs the line between product and peer, we are redefining the nature of digital interactions. Though brimming with promise, this evolution comes with an ethical responsibility to ensure that AI-augmented relationships are transparent, trustworthy, and beneficial.

If you are interested in continuing the discussion, why not reach out to Gary & Kev to discuss how we can co-create intelligent digital experiences that build long-term, ethical relationships with your users?
